“The Trouble with Terminology” is part of a special series of three essays on the language of digital art, commissioned by Right Click Save from the distinguished computer scientist Paul Cohen, son of Harold Cohen. Read his other essays “On Creativity in Digital Art” and “Harold Cohen’s Freehand Line Algorithm”.

Examples of troublesome words include aesthetic, autonomy, collaboration, creativity, emergence, generative, intention, meaning, originality, personality, and style. Oh boy, here comes trouble.

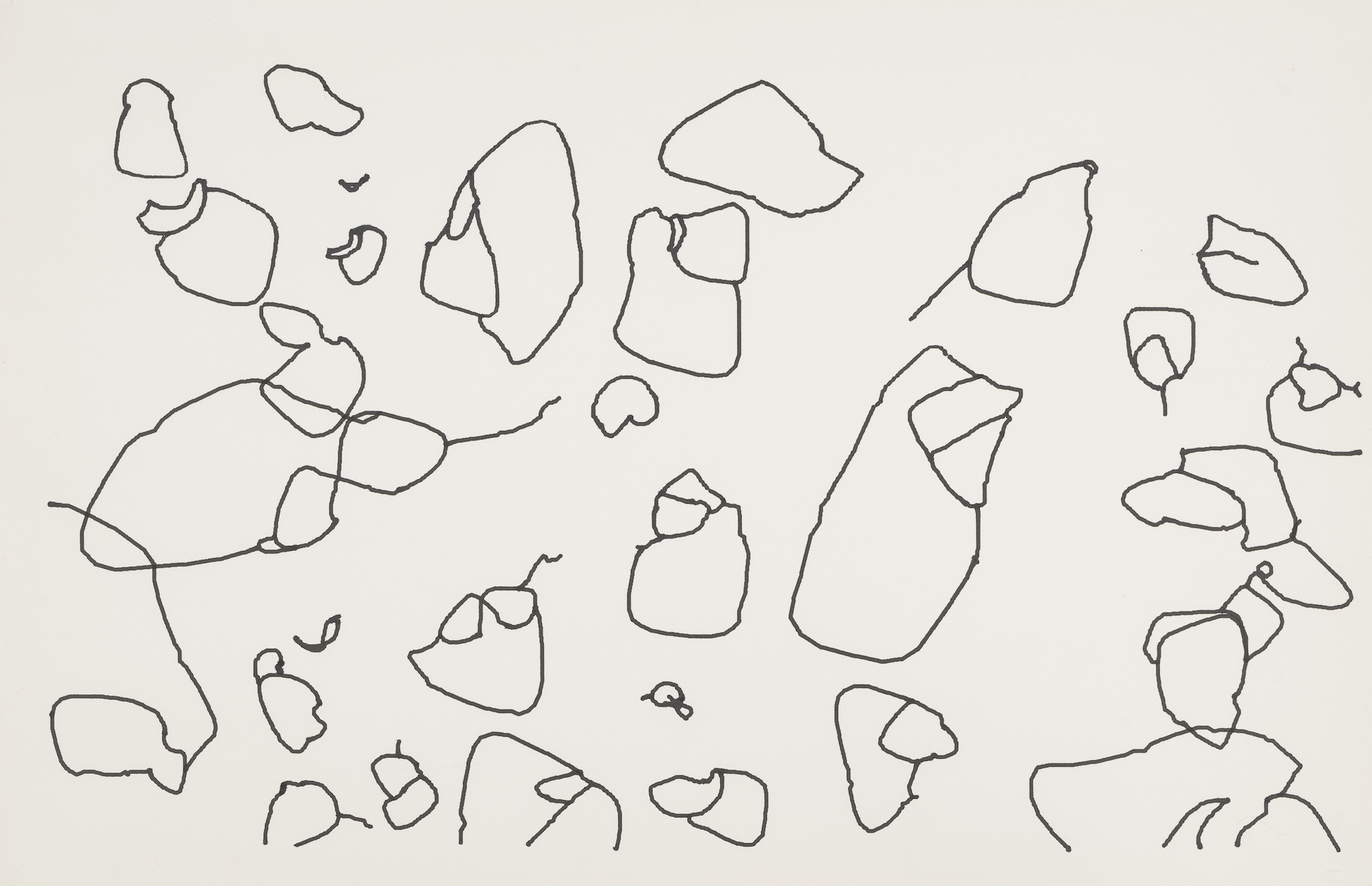


Artists who work with computers are the most qualified people to propose computational accounts of aesthetics, creativity, intentionality, and other phenomena denoted by troublesome words. Here’s how: when you use a troublesome word, define it in terms of the objectively observable behaviors of your system.

For some reason, human-machine interactions are described in the language of enchantment and mystery.

As a result of objectively described constraints and developments, AARON — no longer an emulation, now an expert — became quite autonomous of Harold with respect to color. That’s how a “relationship” between an artist and an art-making program should be presented.

Perhaps this human-machine relay is what all artists mean by “collaboration”. More likely, artists have quite different interpretations of the word. We won’t know unless artists say what they and their programs do.

The issue here is not whether machines have mental states. That debate grows more complicated by the day. The issue is whether we can find a stance, a way to use mentalistic language, that advances our understanding of digital art.

Note that the constructive stance does not say that computational boldness is identical to human boldness, any more than AARON’s color expertise was identical to Cohen’s.

With thanks to Alex Estorick, who conceived, commissioned, and edited this series.

Paul Cohen is a professor of Computer Science at the University of Pittsburgh and the CEO of Causerie.AI, which extracts knowledge from text at scale. Prior to becoming the Founding Dean of the School of Computing and Information at Pitt in 2017, he was a program manager in DARPA’s Information Innovation Office, where he designed and managed the Big Mechanism, Communicating with Computers, and World Modelers programs. He worked at DARPA under an IPA agreement with the University of Arizona, where he founded the School of Information: Sciences, Technology and Arts, now the School of Information. His research is in aspects of artificial intelligence and cognitive science, with interest in how language, communication, and AI methods can foster understanding of highly complicated systems such as cell signaling pathways and biophysical and socio-economic systems. He is the son of the artist Harold Cohen.

___

¹ H Cohen, “Driving the Creative Machine”, Paper presented at Orcas Center, Crossroads Lecture Series, September, 2010, 9.

² H Cohen, “AARON, Colorist: from Expert System to Expert”, Paper presented at University of California, San Diego, October, 2006, para. 47.

³ H Cohen, “Driving the Creative Machine”, 8.

⁴ DC Dennett, “Intentional Systems”, The Journal of Philosophy, Vol. 68, no. 4, February 25, 1971.

⁵ “[T]he definition of intentional systems I have given does not say that [they] really have beliefs and desires, but that one can explain and predict their behavior by ascribing beliefs and desires to them…” Ibid., 195.